Gendered disinformation: 6 reasons why liberal democracies need to respond to this threat

Gendered disinformation is a form of identity-based disinformation that threatens human rights worldwide. It undermines the digital and political rights, as well as the safety and security, of its targets. Its effects are far-reaching: gendered disinformation is used to justify human rights abuses and entrench repression of women and minority groups.[1]

This policy briefing explains what gendered disinformation is, how it impacts individuals and societies, and the challenges in combating it, drawing on case studies from Poland and the UK. It assesses how the UK and EU are responding to gendered disinformation, and sets out a plan of action for governments, platforms, media and civil society.

1. Gendered disinformation is a weapon

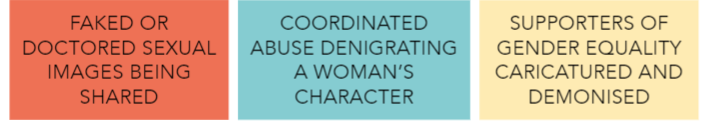

Gendered disinformation is manipulated information that weaponises gendered stereotypes for political, economic or social ends.[2] Examples of gendered disinformation include:[3]

A gendered disinformation campaign is one which is coordinated and targeted at specific people or topics. Campaigns originate and spread in different ways: they may draw on existing rumours or attacks and amplify them inorganically,[4] or originate messages that authentic users outside the campaign then disseminate.[5] Campaigns may be directly state-sponsored or share state-aligned messages, or they may be run by non-state actors.

Gendered disinformation is often part of a broader political strategy,[6] manipulating gendered narratives to silence critics and consolidate power.[7] It can also intersect with other forms of identity-based disinformation,[8] such as that based on sexual orientation, disability, race, ethnicity or religion.[9]

2. Gendered disinformation threatens individuals

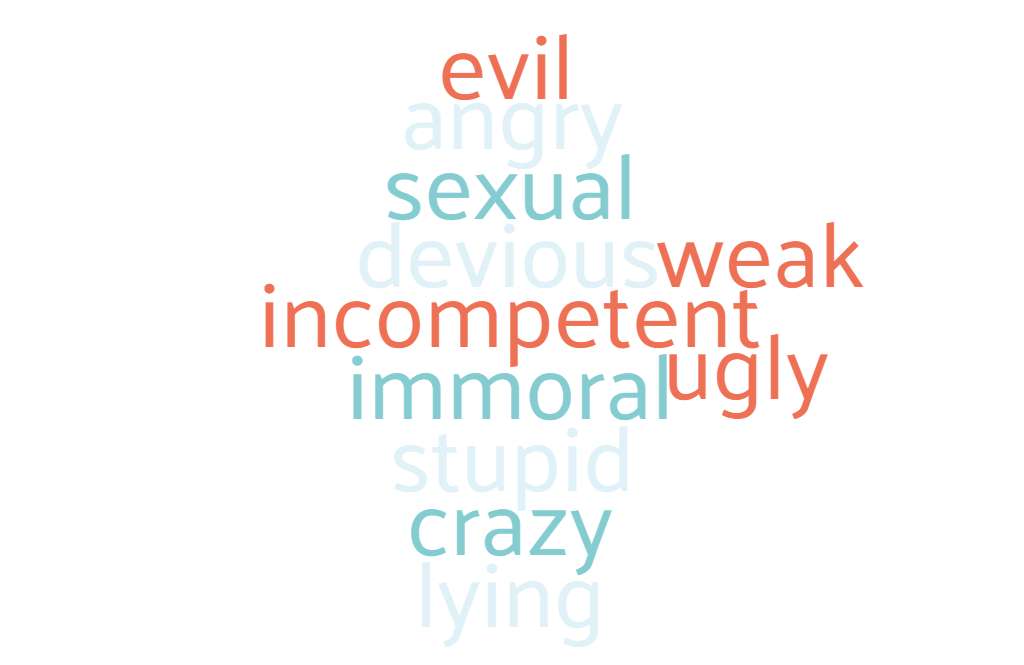

Gendered disinformation disproportionately affects people of the gender it targets, making spaces both online and offline unsafe for them to occupy. It can be weaponised against anyone, but it is often used against women in public life: 42% of women parliamentarians surveyed in 2016 said that they had experienced ‘extremely humiliating or sexually charged’ images of themselves being shared online.[10] Gendered disinformation commonly weaponises stereotypes that portray women as devious, stupid, weak or immoral, or sexualises them, to paint them as untrustworthy and unfit to hold positions of power, a public profile or influence within society.[11]

The psychological toll on its targets can be profound, and it deprives them of the freedom to participate in social media, which in today’s digitalised world is often crucial to people’s professional and personal lives.[12] Nor is online violence the only concern: the volume and nature of the threats and abuse put targets’ personal safety at risk, as those targeting women subject everything from their appearance to their work and private lives to public scrutiny, with alarming levels of detail and distortion.[13]

3. Gendered disinformation threatens democracy

Gendered disinformation fundamentally undermines equal participation in democratic life, and reduces the space for women to be involved in public life.[14] This in turn undermines the effectiveness, the equity, and the representativeness of democratic institutions.[15]

The reinforcement of negative gendered stereotypes online bolsters misogynistic attitudes to women’s role in the public sphere and in society.[16] And the cost to public life is significant. Women in particular are driven out of public life: those who are already in it leave, feeling it is no longer tolerable or safe for them[17],[18],[19],[20] – and those who remain often distance themselves from engaging with the public through social media.[21] Others are put off entering public life in the first place because they anticipate gendered abuse.[22] In the UK, Black women in public life are 84% more likely to experience online abuse than white women, and are likely to be disproportionately excluded from participating.[23]

4. Gendered disinformation threatens security and human rights

Gendered abuse acts as a vector for bad information.[24],[25] Gendered disinformation is a recognised tactic used by authoritarian actors (including in democratic states) to undermine threats to their power – whether that threat be an individual woman opponent, or a whole movement.[26] For instance, in Pakistan, women journalists who criticise the government are subject to false ‘fact-checking’ and resulting harassment.[27] In many cases, women’s rights, and the rights of LGBT+ people, are depicted as threats to order and national identity and used to foster social divisions, often as part of a wider assault on human rights.[28],[29],[30],[31]

Disinformation can also act as a vector for gendered hate.[32] Growing incel and far-right communities online feed the stereotypes that women have hidden malicious intentions or use their sexuality to manipulate men; these narratives then move onto mainstream platforms and gain traction, stoking the hate directed against women in public life.[33] Increasingly extremist gendered disinformation risks legitimising offline violence against women. In the US, online conspiracy theories have fuelled plots to kidnap a female governor.[34] Far-right extremism is a growing threat in the UK:[35] the MP Jo Cox was murdered in 2016 by a far-right extremist,[36] and MPs have spoken out about the continuing threats they receive, including threats of sexual violence.[37],[38] Women journalists in Afghanistan have been killed and threatened, with Human Rights Watch linking this targeting to women journalists ‘challenging perceived social norms’.[39] In Brazil, Marielle Franco, a city councillor and critic of the state, was murdered, after which a disinformation campaign about her life circulated widely online.[40],[41],[42],[43]

By reconceptualising women or LGBT+ people as threats to the existence, morality or stability of the state, gendered disinformation risks making violent action taken to eliminate those threats seem permissible within public political discourse – gendered disinformation is a form of ‘dangerous speech’.[44],[45] It uses gendered stereotypes to dehumanise its targets – the ‘out-group’ of women or LGBT+ people – and builds solidarity amongst their attackers.[46],[47],[48] The results can be activists, journalists or politicians being arrested, detained, attacked or even murdered.

5. Gendered disinformation is parasitic: it amplifies existing stereotypes and prejudices

Gendered disinformation gains its power by tapping into existing stereotypes and caricatures to evoke emotional responses,[49] gain credibility, and resonate with others and encourage them to join in: the intended audience is not only those targeted but also wider observers. Gendered disinformation attacking women will often use several different gendered narratives to undermine or denigrate their character,[50] particularly to depict women as malicious ‘enemies’ or as weak and useless ‘victims’.[51] Some classic tropes weaponised against women include labelling them as:[52]

‘Language matters’, and it is a key instrument in the dissemination of gendered disinformation.[53] Disinformation campaigns in Poland have sought to weaponise and redefine gendered terms in public discourse by equating the concept of ‘women’s rights’ with ‘abortion’, and ‘abortion’ with ‘murder’ and ‘killing children’.[54],[55] Those who support women’s rights and reproductive rights are attacked online by state-aligned disinformation as stupid, evil and hypocritical, with ‘feministka’ (feminist, diminutive) often used as a term of abuse.[56] Another narrative commonly used by the state and public institutions is that ‘LGBT is not people, it’s an ideology’, from which children and wider society must be ‘protected’.[57]

The impact of these campaigns to redefine terms is not simply to make people believe untrue things, but to implicitly permit, and attempt to legitimise, aggression towards these groups and restrictions on their rights.[58] Towns in Poland have declared themselves ‘LGBT-free zones’ where LGBT+ rights are rejected as an “aggressive ideology” and LGBT+ people face discrimination, exclusion and violence.[59],[60],[61] Women’s rights defenders, including those involved in the Women’s Strike protesting restrictions on abortion, have faced detention and threats, which they link to ‘government rhetoric and media campaigns aiming to discredit them and their work, which foster misinformation and hate’.[62] Gendered disinformation exacerbates and legitimises these assaults.[63]

The same weaponised stereotypes common across gendered disinformation campaigns occur in UK political discourse online: that women are devious, evil, immoral, shallow or stupid.[64],[65] A woman MP was targeted while she was pregnant because of her pro-choice politics, with graphic anti-abortion posters put up in her constituency and a website calling for her to be ‘stopped’.[66] Other cases include conspiracy theories accusing a Black woman MP of editing video footage and lying about the racism she faced,[67] and a fake BBC account ‘reporting’ a false interview with a party leader about sexism.[68],[69] Journalists in the UK targeted for their reporting have experienced misogynistic abuse online that echoes these gendered tropes, in particular seeking to undermine their legitimacy and autonomy: calling them a ‘silly little girl’ or a ‘puppet’, making sexualised comments or threats, or attributing malicious motives to them.[70] Women in public life who focus on women’s equality face similar attributions of malevolent intent and have their feminist motivations distorted or fabricated, for example being accused of seeking to ‘emasculate men’.[71]

6. Gendered disinformation can be difficult to identify – for humans and for machines

Although ‘falsity, malign intent and coordination’ are key indicators of gendered disinformation[72], identifying these factors in individual pieces of content is challenging and sometimes impossible.

Human observers may struggle to identify gendered disinformation, which often looks more like online abuse than ‘traditional’ disinformation. Disinformation is often conceptualised as lies or falsehoods, but gendered disinformation is built on stereotypes and existing social judgements about ‘appropriate’ gendered attributes or behaviour, and so may not be straightforwardly false. It may use true information that is misleadingly presented or manipulated, unprovable rumours, or simple value judgements (which cannot be classified as ‘true’ or ‘false’) to attack its targets.[73] Whether a campaign is inauthentic (e.g. falsely amplified by paid trolls or bots) can also be difficult to establish definitively and rapidly. Gendered disinformation can also evade human moderation: it uses in-jokes and coded terms that are immediately understandable only to people with a comprehensive understanding of the political context of the campaign and its targets, and global platforms fail to invest in the in-country expertise this requires.[74],[75]

Technology faces similar challenges. Gendered disinformation exhibits ‘malign creativity’, using different forms of media and ‘coded’ images and terms that seem innocuous or meaningless without context.[76] This may include using pictures of cartons of eggs to taunt women as ‘reproducers’,[77] using nicknames or terms for someone that are used only within that campaign, or swapping characters in common terms of abuse (such as ‘b!tch’).[78] As a result, campaigns can evade automated detection and filters based on particular keywords or known images.[79] Gendered disinformation may also not appear to denigrate a particular gender while still reinforcing harmful stereotypes: disinformation weaponising the view of women as ‘weak’ may co-opt the language of protecting women, especially from violence. It can therefore look similar in form to counterspeech, and identifying it as disinformation requires an understanding of the wider context that is inaccessible to a machine.[80]

How far are the EU and UK ready to meet this challenge?

The UK and the EU are both preparing to implement greater regulation of digital platforms, to try to tackle many forms of online harms.[81] New legislation is a positive first step – but cannot prevent gendered disinformation on its own.

The UK Online Safety proposals, published this year, have potential: they will implement a regulatory framework that enables greater scrutiny of platforms’ systems and processes. This may help to reduce the enforcement gap that exists for both illegal abuse online and ‘legal but harmful’ abuse,[82] by holding platforms to account for clearly and consistently enforcing their own processes for curating and removing such content.

However, concerns remain about its efficacy. Firstly, only a select few platforms will be responsible for making systematic changes on ‘legal but harmful’ content, the category gendered disinformation is likely to often fall into, and many of the more radical forums risk being out of scope.[83] The changes even the largest platforms will be expected to make are primarily to enforce clear terms of service, rather than to take proactive measures to curb gendered disinformation.

Secondly, the proposals seek to protect content of ‘democratic importance’: content that relates to a current political debate, which platforms may have a higher duty to protect from takedown. Because gendered disinformation frequently appears to relate to political issues, such as women’s political decisions, it could end up with special protection from removal.

In Poland, government legislation cannot be the solution. Human rights activists in Poland[84] and around the world are vocal in condemning gendered disinformation, but the disinformation serves the interests of the ruling party. Indeed, in January Poland proposed a new law which would fine social media platforms if they removed any content that was legal under Polish law or banned users who posted it.[85]

When the EU brings in the Digital Services Act, it may include obligations on platforms to change their systems to reduce the spread of violent content, which could include gendered disinformation.[86] However, these will need to be substantive obligations to reduce gendered disinformation: as it stands, only illegal content is likely to be in scope, restricting the degree to which meaningful action can be taken.

The recently published guidance on strengthening the EU Code of Practice on Disinformation is more promising.[87] Expanding the focus from ‘verifiably false or misleading information’ to ‘the full range of manipulative techniques’ brings within scope the emotional, abusive or coded information used in gendered disinformation campaigns.[88] What is required of platforms is also more robust: for instance, signatories are expected to produce a ‘comprehensive list of manipulative tactics’ that are not permitted. Greater transparency on content curation, independent data access and pre-testing of design changes all provide a crucial, iterative knowledge base on which responses to gendered disinformation can be built. However, the guidance fails to acknowledge the disproportionate gendered impacts of many of the ‘manipulative techniques’ it describes (such as deepfakes), and risks overlooking the need for specific action to minimise the gendered weaponisation of these tactics.

Action is also needed to protect gender equality and freedoms at a more fundamental level. The EU and other international institutions should resist attempts by gendered disinformation campaigns to alter or redefine established rights for women and LGBT+ people.[89]

A Manifesto for Change

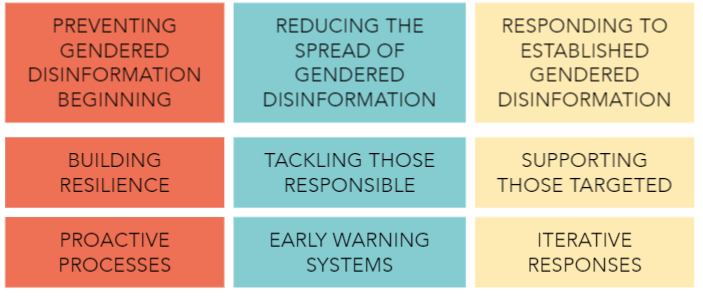

To reduce the impact of gendered disinformation, interventions need to target three stages (prevent, reduce and respond), with progress on each reinforcing the others. Proposals to disarm gendered disinformation should identify how they will contribute to each of these stages.

We need to move from a reactive and narrow approach to a proactive and intersectional approach to gendered disinformation.[90],[91] Digital hate and disinformation campaigns move across platforms, use coded language and incorporate both formal and informal networks, meaning they can quickly evolve in response to reactive measures.[92] Though platforms should continue to develop and invest in empowering and supporting users in controlling their online experiences and reporting abuse or harassment,[93],[94] responses to gendered disinformation cannot be limited to these measures.[95]

This means identifying the factors which increase the risk of different forms of gendered disinformation in different contexts and taking steps to minimise them, rather than waiting for campaigns to occur and then trying to retrofit an effective counterattack. It means moving from a model where women shoulder the burden of reporting individual attackers to one where information environments are redesigned to reduce the risk of gendered disinformation.[96]

Policymakers, platforms and civil society need to create early warning systems through which gendered disinformation campaigns can be reported and identified. Initiatives such as the EU Rapid Alert System, which enables rapid, coordinated international responses from governments and platforms to an evolving disinformation threat, could be deployed in response to newly identified gendered disinformation campaigns.[97] However, as gendered disinformation is a tactic used by authoritarian states, we cannot rely solely on governments to bring about change.[98]

Below is a compilation of actions that can be taken by different stakeholders to proactively reduce the incidence, spread and impact of gendered disinformation. Together, these make up a manifesto for change: to better protect individuals, democracy, security and human rights.

PREVENT: how do we make gendered disinformation less likely to occur?

Governments/international institutions

- Maintain and stand up for established definitions of rights and liberties for women and LGBT+ people which are at risk of becoming weaponised[99]

- Legislate to ensure that platforms meet design standards that apply a gender-sensitive lens[100]

- Support programming to combat gender inequality and gendered oppression[101]

- Members of political parties should ensure that campaigning does not exacerbate gendered stereotypes or attack other candidates in gendered ways[102]

- Introduce gender-sensitive standards for political campaigning and for politicians to uphold[103]

- Establish gender equality committees to assess legislation for any potential discriminatory effects[104]

- Ringfence a portion of taxes levied on digital services to fund initiatives to combat online gender-based violence[105]

Platforms

- Test how design choices affect the incidence of gendered disinformation and make product decisions accordingly[106]

- Make data more easily available to researchers to support the tracking and identification of gendered disinformation[107]

Media

- Ensure reporting on people in public life is gender-sensitive and does not reinforce harmful stereotypes[108]

Civil Society

- Support greater visibility of digital allyship by men to create a cultural shift of non-tolerance of abuse in online communities[109]

- Engage with stakeholders internationally to build research and understanding of gendered disinformation[110],[111],[112]

- Ensure research on disinformation and hate online is carried out with an intersectional gender lens

- Increase research and investigation into gendered disinformation in the Global South to redress the current bias towards research on the Global North[113]

REDUCE: how do we make gendered disinformation less able to spread?

Governments/international institutions

- Build early warning systems, like those that exist to tackle other escalating risks of violence[114]

- Invest in digital and media literacy initiatives[115]

- Recognise that the protection of rights online, including the protection of freedom of expression, requires action against gendered disinformation[116]

- Ensure legislation is flexible enough to dynamically respond to evolving technologies and threats[117]

- Introduce independent oversight of what changes platforms are making and how they are responding to gendered disinformation campaigns[118]

- Require platforms to provide comprehensive psychological support for human moderators[119]

- Introduce measures to disrupt platforms’ business models that profit from gendered disinformation (such as making tax breaks dependent on platforms meeting certain standards of content curation and harm prevention)[120]

Platforms

- Improve processes for running ongoing threat assessments and early warning systems, engaging with local experts[121],[122],[123],[124],[125],[126]

- Facilitate the reporting of multiple posts together[127]

- Empower users to provide context for moderators to review when submitting reports of abuse or harassment[128]

- Improve content curation systems to demote gendered disinformation and promote counter messaging[129],[130],[131],[132],[133],[134]

- Strengthen terms of service to clearly define different forms of online violence, prohibit gendered disinformation and enforce those terms consistently[135],[136]

- Improve content moderation systems: ensure enforcement by increasing and supporting human moderation[137],[138]

- Iteratively review and update moderation tools and practices in consultation with experts who understand the relevant context,[139],[140] and update moderation processes rapidly in response to new campaigns[141],[142],[143],[144]

- Share data on how different interventions affect the spread of disinformation and misinformation on their services[145]

- Offer users more information and powers to control responses when their content is being widely shared, and enable them to share these powers with ‘trusted contacts’[146]

Media

- Support digital and media literacy initiatives[147]

- Reporting on gendered disinformation should clearly call it out and avoid amplifying otherwise fringe narratives[148],[149]

Civil Society

- Engage in counter messaging to promote gender equality as a key tenet of the ideals being weaponised, such as national identity[150]

- Work to identify how international human rights law can be applied by private companies in content moderation decisions[151]

- Coordinate internationally to raise the alarm about new gendered disinformation campaigns and provide workable solutions[152],[153],[154]

RESPOND: how do we lessen the harmful impacts of gendered disinformation?

Governments/international institutions

- Invest in programming which supports people in public life who are targeted by gendered disinformation

- Invest in education for law enforcement on how online abuse works and how they should respond to reports of threats or abuse[155]

Platforms

- Make the process of reporting gendered disinformation clearer and more transparent and include greater support and resources for its targets[156]

- Ensure information and tools for users are explained in clear and accessible language[157]

- Increase user powers to block or restrict content or how other users can contact them or interact with their posts[158],[159]

- Invest in systems which respond rapidly to remove accounts or content, including in private channels[160]

- Introduce automated and human moderation systems specifically designed to identify likely incitements to violence in response to a specific campaign or person’s speech[161]

Media

- Engage with platforms to support fact-checking initiatives to discredit gendered disinformation campaigns

Civil Society

- Carry out longitudinal research to track the long-term financial and political impacts of disinformation and its chilling effects[162],[163],[164]

- Support people targeted by gendered disinformation through digital safety training[165]

- Avoid framing solutions to gendered disinformation as being the target’s job to manage (e.g. by simply ‘not engaging’)[166]

This work was supported by:

With thanks to Mandu Reid, leader of the Women’s Equality Party in the UK, Eliza Rutynowska, a lawyer at the Civil Development Forum (FOR) in Poland, and Marianna Spring, a specialist reporter covering disinformation and social media at the BBC, for their input.

—

References

[1] https://www.brookings.edu/techstream/gendered-disinformation-is-a-national-security-problem/; https://demos.co.uk/project/engendering-hate-the-contours-of-state-aligned-gendered-disinformation-online/

[2] https://demos.co.uk/project/engendering-hate-the-contours-of-state-aligned-gendered-disinformation-online/

[3] https://www.disinfo.eu/publications/misogyny-and-misinformation:-an-analysis-of-gendered-disinformation-tactics-during-the-covid-19-pandemic/; https://demos.co.uk/project/engendering-hate-the-contours-of-state-aligned-gendered-disinformation-online/; https://www.beautiful.ai/player/-MSj9MbXLfaRfSreqNeL/Womens-Disinfo-KW; https://speier.house.gov/_cache/files/6/c/6c8eec9e-eadf-4aac-a416-3859703eefc4/802A6A022C05E16123E4EAF4B0BE5BBF.gender-disinformation-letter-to-facebook-final-formatted-2.pdf

[4] https://demos.co.uk/project/warring-songs-information-operations-in-the-digital-age/; https://demos.co.uk/project/engendering-hate-the-contours-of-state-aligned-gendered-disinformation-online/

[5] https://scholarworks.umass.edu/cgi/viewcontent.cgi?article=1075&context=communication_faculty_pubs

[6] https://www.brookings.edu/techstream/gendered-disinformation-is-a-national-security-problem/

[7] https://www.washingtonpost.com/opinions/2021/06/07/how-authoritarians-use-gender-weapon/?utm_medium=social&utm_campaign=wp_opinions&utm_source=twitter

[8] See https://protectionapproaches.org/identity-based-violence for an explanation of identity based violence

[9] Interview with Mandu Reid

[10] https://www.nytimes.com/2021/06/03/us/disinformation-online-attacks-female-politicians.html

[11] https://demos.co.uk/wp-content/uploads/2023/03/Engendering-Hate-Report-FINAL.pdf

[12] https://www.unwomen.org/-/media/headquarters/attachments/sections/csw/65/egm/di%20meco_online%20threats_ep8_egmcsw65.pdf?la=en&vs=1511

[13] Interview with Marianna Spring

[14] https://demos.co.uk/wp-content/uploads/2023/03/Engendering-Hate-Report-FINAL.pdf; https://www.wilsoncenter.org/publication/malign-creativity-how-gender-sex-and-lies-are-weaponized-against-women-online; https://policyblog.stir.ac.uk/2020/03/23/gendered-misinformation-online-violence-against-women-in-politics-capturing-legal-responsibility/; https://mediawell.ssrc.org/expert-reflections/disinformation-democracy-and-the-social-costs-of-identity-based-attacks-online/

[15] Interview with Mandu Reid

[16] https://genderit.org/feminist-talk/approaching-fight-against-autocracy-feminist-principles-freedom; interview with Mandu Reid

[17] https://www.bbc.co.uk/news/election-2019-50246969

[18] https://www.bbc.co.uk/news/election-2019-50246969

[19] https://www.theguardian.com/politics/2019/oct/18/violent-threats-against-mps-commonplace-report-warns

[20] https://www.bbc.co.uk/news/uk-politics-49247808

[21] Interview with Mandu Reid

[22] Interview with Mandu Reid

[23] https://www.amnesty.org.uk/press-releases/uk-online-abuse-against-black-women-mps-chilling; interview with Mandu Reid

[24] https://mediawell.ssrc.org/expert-reflections/disinformation-democracy-and-the-social-costs-of-identity-based-attacks-online/

[25] https://thesentinelproject.org/2021/05/31/gendering-misinformation-management-preliminary-results-from-our-baseline-surveys/

[26] https://www.washingtonpost.com/opinions/2021/06/07/how-authoritarians-use-gender-weapon/?utm_medium=social&utm_campaign=wp_opinions&utm_source=twitter

[27] https://www.codastory.com/authoritarian-tech/pakistan-harassment-journalists/

[28] https://www.washingtonpost.com/opinions/2021/06/07/how-authoritarians-use-gender-weapon/?utm_medium=social&utm_campaign=wp_opinions&utm_source=twitter

[29] https://www.opendemocracy.net/en/5050/chicago-kiev-women-send-solidarity-poland-after-abortion-ban/

[30] https://www.vice.com/en/article/qj8pqv/poland-far-right-lgbtq-law-and-justice-decade-of-hate

[31] https://coinform.eu/gendered-misinformation-online-violence-against-women-in-politics-capturing-legal-responsibility/

[32] https://mediawell.ssrc.org/expert-reflections/disinformation-democracy-and-the-social-costs-of-identity-based-attacks-online/

[33] Interview with Mandu Reid

[34] https://www.nytimes.com/2021/06/03/us/disinformation-online-attacks-female-politicians.html

[35] https://www.thenationalnews.com/world/far-right-still-a-growing-threat-five-years-after-uk-politician-jo-cox-s-murder-by-neo-nazi-1.1242642; https://www.thenationalnews.com/world/europe/hope-not-hate-far-right-threat-to-bounce-back-in-2021-1.1187993

[36] https://www.theguardian.com/uk-news/2016/nov/23/thomas-mair-slow-burning-hatred-led-to-jo-cox-murder

[37] https://blogs.lse.ac.uk/politicsandpolicy/politics-as-usual-rising-violence-against-female-politicians-threatens-democracy-itself/

[38] https://www.theguardian.com/technology/2016/jun/18/vile-online-abuse-against-women-mps-needs-to-be-challenged-now

[39] https://www.theguardian.com/global-development/2021/mar/09/afghanistan-broadcaster-women-killed-isis

[40] https://rioonwatch.org/?p=63454; https://piaui.folha.uol.com.br/lupa/2019/03/25/artigo-fake-news-marielle/

[41] https://demtech.oii.ox.ac.uk/wp-content/uploads/sites/127/2021/03/Case-Studies_FINAL.pdf

[42] https://www.theguardian.com/commentisfree/2020/jun/22/jair-bolsonaro-fake-news-accusation-marielle-franco

[43] https://www.brookings.edu/techstream/gendered-disinformation-is-a-national-security-problem/

[44] https://dangerousspeech.org/to-keep-social-media-from-inciting-violence-focus-on-responses-to-posts-more-than-the-posts-themselves/

[45] https://www.academia.edu/905194/Genocidal_Language_Games; Interview with Eliza Rutynowska

[46] https://mediawell.ssrc.org/literature-reviews/hate-speech-information-disorder-and-conflict/versions/1-0/

[47] See https://protectionapproaches.org/identity-based-violence for an explanation of identity based violence

[48] https://mediawell.ssrc.org/expert-reflections/disinformation-democracy-and-the-social-costs-of-identity-based-attacks-online/

[49] https://www.disinfo.eu/publications/misogyny-and-misinformation:-an-analysis-of-gendered-disinformation-tactics-during-the-covid-19-pandemic/

[50] https://www.nytimes.com/2021/06/03/us/disinformation-online-attacks-female-politicians.html

[51] https://www.disinfo.eu/publications/misogyny-and-misinformation:-an-analysis-of-gendered-disinformation-tactics-during-the-covid-19-pandemic/

[52] https://www.disinfo.eu/publications/misogyny-and-misinformation:-an-analysis-of-gendered-disinformation-tactics-during-the-covid-19-pandemic/; https://demos.co.uk/wp-content/uploads/2023/03/Engendering-Hate-Report-FINAL.pdf; https://www.beautiful.ai/player/-MSj9MbXLfaRfSreqNeL/Womens-Disinfo-KW

[53] Interview with Eliza Rutynowska

[54] Interview with Eliza Rutynowska

[55] https://www.hrw.org/news/2021/03/31/poland-escalating-threats-women-activists

[56] https://demos.co.uk/wp-content/uploads/2020/10/Engendering-Hate-Report-FINAL.pdf

[57] https://www.euronews.com/2020/06/15/polish-president-says-lgbt-ideology-is-worse-than-communism; Interview with Eliza Rutynowska

[58] https://notesfrompoland.com/2021/06/23/lgbt-deviants-dont-have-same-rights-as-normal-people-says-polish-education-minister/; Interview with Eliza Rutynowska

[59] https://www.bbc.co.uk/news/stories-54191344

[60] https://www.reuters.com/article/us-poland-lgbt-europe-trfn-idUSKBN2AA20S

[61] https://www.bbc.co.uk/news/world-europe-53673411

[62] https://www.hrw.org/news/2021/03/31/poland-escalating-threats-women-activists

[63] https://www.hrw.org/news/2021/03/31/poland-escalating-threats-women-activists

[64] https://www.amnesty.org.uk/press-releases/uk-online-abuse-against-black-women-mps-chilling

[65] A recent study of abuse directed at international women politicians, including the UK Home Secretary, did not find evidence of gendered disinformation campaigns against her, though it found that more work was needed to establish why, and what this indicates about gendered disinformation in the UK more widely. This may suggest that high-profile, sophisticated and coordinated gendered disinformation campaigns are currently less common in the UK. However, there have been multiple instances of gendered disinformation being used against women politicians in the UK over many years. See https://www.wilsoncenter.org/publication/malign-creativity-how-gender-sex-and-lies-are-weaponized-against-women-online

[66] https://www.theguardian.com/politics/2019/oct/06/stella-creasy-anti-abortion-group-police-investigate-extremist-targeting; https://www.theguardian.com/world/2019/oct/03/london-council-orders-anti-abortion-activists-to-remove-foetus-poster

[67] See also https://inews.co.uk/news/politics/dawn-butler-video-police-stopped-labour-mp-east-london-conspiracy-theories-575460; https://firstdraftnews.org/latest/uk-general-election-2019-the-false-misleading-and-suspicious-claims-crosscheck-uncovered-in-the-first-week/

[68] https://firstdraftnews.org/articles/uk-general-election-2019-the-false-misleading-and-suspicious-claims-crosscheck-uncovered-in-the-first-week/

[69] As well as a satirical article, claiming that there was ‘harrowing’ footage of her killing squirrels, which went viral and was taken as authentic: https://www.independent.co.uk/news/uk/politics/jo-swinson-squirrels-shooting-stones-lib-dems-slingshot-fake-news-a9209196.html

[70] Interview with Marianna Spring

[71] Interview with Mandu Reid

[72] https://www.wilsoncenter.org/sites/default/files/media/uploads/documents/Report%20Malign%20Creativity%20How%20Gender%2C%20Sex%2C%20and%20Lies%20are%20Weaponized%20Against%20Women%20Online_0.pdf

[73] Engendering Hate: The contours of state aligned gendered disinformation online

[74] https://www.wilsoncenter.org/sites/default/files/media/uploads/documents/Report%20Malign%20Creativity%20How%20Gender%2C%20Sex%2C%20and%20Lies%20are%20Weaponized%20Against%20Women%20Online_0.pdf; Engendering Hate: The contours of state aligned gendered disinformation online

[75] https://www.theguardian.com/technology/2018/aug/16/facebook-myanmar-failure-blundering-toddler

[76] https://www.wilsoncenter.org/sites/default/files/media/uploads/documents/Report%20Malign%20Creativity%20How%20Gender%2C%20Sex%2C%20and%20Lies%20are%20Weaponized%20Against%20Women%20Online_0.pdf

[77] https://www.wired.com/story/online-harassment-toward-women-getting-more-insidious/; https://www.mediasupport.org/blogpost/digital-misogyny-why-gendered-disinformation-undermines-democracy/

[78] https://www.wilsoncenter.org/sites/default/files/media/uploads/documents/Report%20Malign%20Creativity%20How%20Gender%2C%20Sex%2C%20and%20Lies%20are%20Weaponized%20Against%20Women%20Online_0.pdf

[79] https://www.wilsoncenter.org/sites/default/files/media/uploads/documents/Report%20Malign%20Creativity%20How%20Gender%2C%20Sex%2C%20and%20Lies%20are%20Weaponized%20Against%20Women%20Online_0.pdf; Engendering Hate: The contours of state aligned gendered disinformation online

[80] Engendering Hate: The contours of state aligned gendered disinformation online

[81] https://www.gov.uk/government/news/landmark-laws-to-keep-children-safe-stop-racial-hate-and-protect-democracy-online-published

[82] Interviews with Mandu Reid and Marianna Spring

[83] https://graziadaily.co.uk/life/in-the-news/online-safety-bill-major-concerns/

[84] https://notesfrompoland.com/2020/10/30/the-symbols-of-polands-abortion-protests-explained/

[85] https://www.bbc.co.uk/news/technology-55678502

[86] https://ec.europa.eu/commission/presscorner/detail/en/QANDA_20_2348

[87] https://digital-strategy.ec.europa.eu/en/library/guidance-strengthening-code-practice-disinformation

[88] https://digital-strategy.ec.europa.eu/en/policies/code-practice-disinformation

[89] Interview with Eliza Rutynowska

[90] https://carnegieendowment.org/2020/11/30/tackling-online-abuse-and-disinformation-targeting-women-in-politics-pub-83331

[91] https://counteringdisinformation.org/topics/gender/2-current-and-promising-approaches#currentapproaches

[92] https://mediawell.ssrc.org/literature-reviews/hate-speech-information-disorder-and-conflict/versions/1-0/

[93] https://webfoundation.org/2021/07/generation-equality-letter/

[94] https://www.dailydot.com/debug/tech-companies-commitment-fight-online-gender-abuse/

[95] https://weareultraviolet.org/putting-the-onus-on-women-is-a-pr-stunt-the-platforms-are-the-problem-2/

[96] https://mediawell.ssrc.org/expert-reflections/disinformation-democracy-and-the-social-costs-of-identity-based-attacks-online/; Interview with Marianna Spring

[97] https://www.euractiv.com/section/digital/news/eu-alert-triggered-after-coronavirus-disinformation-campaign/

[98] https://www.washingtonpost.com/opinions/2021/06/07/how-authoritarians-use-gender-weapon/; Interview with Eliza Rutynowska

[99] Interview with Eliza Rutynowska

[100] https://www.brookings.edu/techstream/gendered-disinformation-is-a-national-security-problem/

[101] https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/866353/Quick_Read-Gender_and_countering_disinformation.pdf

[102] https://www.beautiful.ai/player/-MSj9MbXLfaRfSreqNeL/Womens-Disinfo-KW

[103] https://issuu.com/migsinstitute/docs/whitepaper_final_version.docx

[104] https://www.washingtonpost.com/opinions/2021/06/07/how-authoritarians-use-gender-weapon/

[105] https://glitchcharity.co.uk/tech-tax-campaign/

[106] https://digital-strategy.ec.europa.eu/en/library/guidance-strengthening-code-practice-disinformation

[107] https://digital-strategy.ec.europa.eu/en/library/guidance-strengthening-code-practice-disinformation

[108] https://weareultraviolet.org/wp-content/uploads/2021/01/uv-vp-reporting-styleguide-v8.pdf

[109] Interview with Mandu Reid

[110] https://www.brookings.edu/techstream/gendered-disinformation-is-a-national-security-problem/

[111] https://www.unwomen.org/-/media/headquarters/attachments/sections/csw/65/egm/di%20meco_online%20threats_ep8_egmcsw65.pdf?la=en&vs=1511

[112] https://www.mediasupport.org/news/gendered-disinformation-and-what-can-be-done-to-counter-it/

[113] https://mediawell.ssrc.org/literature-reviews/hate-speech-information-disorder-and-conflict/versions/1-0/

[114] https://earlywarningproject.ushmm.org/about

[115] https://www.beautiful.ai/player/-MSj9MbXLfaRfSreqNeL/Womens-Disinfo-KW

[116] https://www.leaf.ca/wp-content/uploads/2021/04/Full-Report-Deplatforming-Misogyny.pdf

[117] Interview with Mandu Reid

[118] https://www.brookings.edu/techstream/gendered-disinformation-is-a-national-security-problem/

[119] https://www.foxglove.org.uk/news/open-letter-from-content-moderators-re-pandemic

[120] https://issuu.com/migsinstitute/docs/whitepaper_final_version.docx; Interview with Mandu Reid

[121] https://www.beautiful.ai/player/-MSj9MbXLfaRfSreqNeL/Womens-Disinfo-KW

[122] https://carnegieendowment.org/2020/11/30/tackling-online-abuse-and-disinformation-targeting-women-in-politics-pub-83331

[123] https://counteringdisinformation.org/topics/gender/2-current-and-promising-approaches#currentapproaches

[124] https://docs.wixstatic.com/ugd/f4d9b9_ce178075e9654b719ec2b4815290f00f.pdf

[125] https://counteringdisinformation.org/topics/gender/2-current-and-promising-approaches#currentapproaches

[126] https://counteringdisinformation.org/topics/gender/2-current-and-promising-approaches#currentapproaches

[127] https://www.mediasupport.org/blogpost/digital-misogyny-why-gendered-disinformation-undermines-democracy/

[128] https://uploads-ssl.webflow.com/6059db55178602abe7e34c9c/60dcf9fa401ea0315e802b33_OGBV_Report_June2021.pdf

[129] https://speier.house.gov/_cache/files/6/c/6c8eec9e-eadf-4aac-a416-3859703eefc4/802A6A022C05E16123E4EAF4B0BE5BBF.gender-disinformation-letter-to-facebook-final-formatted-2.pdf

[130] https://www.mediasupport.org/blogpost/digital-misogyny-why-gendered-disinformation-undermines-democracy/

[131] https://www.ripoti.africa/site/index

[132] https://www.wired.com/story/online-harassment-toward-women-getting-more-insidious/

[133] https://www.beautiful.ai/player/-MSj9MbXLfaRfSreqNeL/Womens-Disinfo-KW; Interview with Marianna Spring

[134] https://carnegieendowment.org/2020/11/30/tackling-online-abuse-and-disinformation-targeting-women-in-politics-pub-83331

[135] https://webfoundation.org/2020/11/the-impact-of-online-gender-based-violence-on-women-in-public-life/

[136] https://www.wired.com/story/online-harassment-toward-women-getting-more-insidious/; https://www.wilsoncenter.org/sites/default/files/media/uploads/documents/Report%20Malign%20Creativity%20How%20Gender%2C%20Sex%2C%20and%20Lies%20are%20Weaponized%20Against%20Women%20Online_0.pdf

[137] https://demos.co.uk/wp-content/uploads/2023/03/Engendering-Hate-Report-FINAL.pdf

[138] https://docs.wixstatic.com/ugd/f4d9b9_ce178075e9654b719ec2b4815290f00f.pdf

[139] https://demos.co.uk/wp-content/uploads/2023/03/Engendering-Hate-Report-FINAL.pdf

[140] https://docs.wixstatic.com/ugd/f4d9b9_ce178075e9654b719ec2b4815290f00f.pdf

[141] https://webfoundation.org/2020/11/the-impact-of-online-gender-based-violence-on-women-in-public-life/

[142] https://docs.wixstatic.com/ugd/f4d9b9_ce178075e9654b719ec2b4815290f00f.pdf

[143] https://www.wilsoncenter.org/sites/default/files/media/uploads/documents/Report%20Malign%20Creativity%20How%20Gender%2C%20Sex%2C%20and%20Lies%20are%20Weaponized%20Against%20Women%20Online_0.pdf

[144] https://speier.house.gov/_cache/files/6/c/6c8eec9e-eadf-4aac-a416-3859703eefc4/802A6A022C05E16123E4EAF4B0BE5BBF.gender-disinformation-letter-to-facebook-final-formatted-2.pdf

[145] Interview with Mandu Reid

[146] https://uploads-ssl.webflow.com/6059db55178602abe7e34c9c/60dcf9fa401ea0315e802b33_OGBV_Report_June2021.pdf

[147] https://www.beautiful.ai/player/-MSj9MbXLfaRfSreqNeL/Womens-Disinfo-KW

[148] https://docs.wixstatic.com/ugd/f4d9b9_ce178075e9654b719ec2b4815290f00f.pdf

[149] https://weareultraviolet.org/wp-content/uploads/2021/01/uv-vp-reporting-styleguide-v8.pdf

[150] https://www.washingtonpost.com/opinions/2021/06/07/how-authoritarians-use-gender-weapon/

[151] https://items.ssrc.org/disinformation-democracy-and-conflict-prevention/trump-in-the-rearview-mirror-how-to-better-regulate-violence-inciting-content-online/

[152] https://www.brookings.edu/techstream/gendered-disinformation-is-a-national-security-problem/

[153] https://www.unwomen.org/-/media/headquarters/attachments/sections/csw/65/egm/di%20meco_online%20threats_ep8_egmcsw65.pdf?la=en&vs=1511

[154] https://www.mediasupport.org/news/gendered-disinformation-and-what-can-be-done-to-counter-it/

[155] Interview with Marianna Spring

[156] https://uploads-ssl.webflow.com/6059db55178602abe7e34c9c/60dcf9fa401ea0315e802b33_OGBV_Report_June2021.pdf

[157] https://uploads-ssl.webflow.com/6059db55178602abe7e34c9c/60dcf9fa401ea0315e802b33_OGBV_Report_June2021.pdf

[158] https://www.beautiful.ai/player/-MSj9MbXLfaRfSreqNeL/Womens-Disinfo-KW; Interview with Marianna Spring

[159] https://carnegieendowment.org/2020/11/30/tackling-online-abuse-and-disinformation-targeting-women-in-politics-pub-83331

[160] Interview with Marianna Spring

[161] https://dangerousspeech.org/to-keep-social-media-from-inciting-violence-focus-on-responses-to-posts-more-than-the-posts-themselves/

[162] https://www.mediasupport.org/news/gendered-disinformation-and-what-can-be-done-to-counter-it/; Interview with Mandu Reid

[163] https://www.mediasupport.org/blogpost/digital-misogyny-why-gendered-disinformation-undermines-democracy/

[164] https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/866351/How_to_Guide_on_Gender_and_Strategic_Communication_in_Conflict_and_Stabilisation_Contexts_-_January_2020_-_Stabilisation_Unit.pdf

[165] https://glitchcharity.co.uk/wp-content/uploads/2021/04/Dealing-with-digital-threats-to-democracy-PDF-FINAL-1.pdf

[166] Interview with Marianna Spring